So Many Agents, So Little Time

How to steer your business through the hype cycle without crashing

Cutting Through the Noise

2026 planning is tangled up with AI hype, and leaders need clarity before committing.

It’s fall 2025. Schools are back in session, the markets are settling into their last-quarter rhythm, and you are working on your strategic plan for 2026. You know artificial intelligence features somewhere in that plan. The pressure is there in every board conversation and every vendor pitch. You also know it will reshape how your operations run, how your people work, and how your competitors invest. What is harder to see from here is how it will change your business model itself.

The conversation about AI has become louder, faster, and harder to ignore. At every conference, on every newsfeed, someone is promising that agents will transform entire industries. Yet the practical questions remain unanswered. When will the technology be reliable enough to use at scale? Which of the thousands of startups will still exist when your plan is halfway through execution? Where should you invest now, and where should you hold back?

This article looks ahead over the next 18 months, not as a prediction of every twist and turn, but as a guide to help you make decisions with clarity. We will cut through the noise of the current hype cycle, examine where the technology actually stands, and outline a timeline for when enterprises like yours can expect stability, governance, and standards. Most importantly, we will look at what you can do now to prepare your business without wasting money chasing illusions.

The goal is simple: to help you lead with foresight, not follow in fear. The question you should be asking yourself and your leadership team is this: Are we building our plan around today’s hype, or tomorrow’s reality?

The Hype Tsunami

Capital, startups, and constant model releases have created frenzy, not stability.

The last two years have seen a surge of investment in artificial intelligence unlike anything in recent memory. By some counts, there are more than 50,000 generative AI startups worldwide. Add to that a smaller but rapidly growing cohort of agentic AI startups, and the landscape begins to look unsustainable. There is simply not enough funding or market demand to support so many companies, especially when most are still experimenting with the same underlying models.

Meanwhile, the large model providers continue their relentless pace. OpenAI, Anthropic, Google, and Meta release each new generation with great fanfare, promising more power, longer context windows, and fewer mistakes. Research and development is moving quickly, and some of the advances are remarkable. The tools are capable of impressive results in the right conditions. But for enterprises making strategic plans, this pace of change creates as much uncertainty as opportunity. Few companies have mastered the current models, yet already the market waits impatiently for the next one.

The scale of investment tells its own story. Spending on AI infrastructure has already exceeded the levels poured into telecom and internet infrastructure during the dot-com boom, and continues to climb. Analysts have noted that this investment is so significant it has become a form of private-sector stimulus, contributing more to U.S. economic growth in recent quarters than consumer spending itself.

“Capex spending for AI contributed more to growth in the U.S. economy in the past two quarters than all of consumer spending.” — Neil Dutta, Renaissance Macro Research

At the same time, observers within the industry are beginning to question the foundations. Behind closed doors, many founders and investors acknowledge that today’s large language models have structural limitations that more data and more compute will not fix. They know the architecture has plateaued, yet the hype continues to drive capital, headlines, and careers.

“Hallucinations aren’t edge cases. They’re the ceiling. And that ceiling is structural.” — Srini Pagidyala, The $10T Illusion

For enterprise leaders, this is the heart of the dilemma. On one hand, you are witnessing an extraordinary wave of innovation, supported by historic levels of capital. On the other, you are operating in an environment where the noise of hype drowns out sober assessment of what is actually possible. This is not the first time business leaders have faced such a moment. What makes today different is the scale of the bet being placed, and the speed at which it is unfolding.

Not There Yet

Today’s agents can’t meet enterprise standards for reliability, security, or compliance.

If the hype suggests inevitability, the reality is more complicated. Enterprises need technology that is dependable, secure, and auditable. Today’s AI systems are not yet at that standard.

Generative models can produce fluent text, but they still hallucinate facts, misinterpret instructions, and fail unpredictably. Even the leading platforms acknowledge that reliability at scale remains elusive. The excitement around “agents” adds another layer of risk. Early tests show that autonomous agents can plan and act, but they are brittle. They loop, they get stuck, and they make decisions that cannot be explained after the fact. For businesses that spend millions ensuring processes are tested, repeatable, and compliant, this is not a minor issue.

“AI agents are not yet enterprise-ready. They lack the monitoring, observability, and guardrails that regulated industries require.” — Leon Furze, Everything I’ve Learned so far About OpenAI’s Agents

Security compounds the problem. Traditional software lives in controlled environments, with clear perimeters. Agentic AI does not. Agents move across systems, call external services, and exchange information with other agents. Each of those interactions creates a potential attack surface.

Researchers are already finding ways to exploit these vulnerabilities. A recent demonstration showed how attackers could hijack an enterprise agent built in Microsoft’s Copilot Studio, redirecting its outputs and actions without detection. Others have described “AIjacking,” where a malicious actor takes over an autonomous workflow for financial or reputational gain.

“Agents introduce new attack surfaces that current enterprise security models are not designed to protect.” — Stuart Winter-Tear, summarizing a survey on AgentOps

None of this means enterprises should dismiss the technology. But it does mean that adoption will not happen on the same timeline as consumer applications. Businesses operate in regulated environments with obligations to shareholders, regulators, and customers. Until the tools mature, the risks of deploying agents in production outweigh the rewards.

The consequence is straightforward: agentic AI will not enter enterprise production environments at scale until governance, monitoring, and security standards catch up. That process is already underway, but it will take time.

This is why the next 18 months matter. Enterprises are watching a market filled with thousands of vendors, billions of dollars in investment, and technology that is still unreliable and insecure. History tells us that such moments do not last. What follows is a period of consolidation, standard-setting, and governance. The shape of that transition — and how you navigate it — is the focus of the next section.

Correction Coming

Most startups will disappear, while standards and governance shape what survives.

Every technology cycle begins with abundance. Too many startups, too much capital, and too little differentiation. The last wave was “Big Data.” Between 2012 and 2016, hundreds of companies emerged around Hadoop, Spark, and data lakes. By 2022, only a handful remained: Databricks, Snowflake, Confluent. Everyone else had been acquired, had folded, or had simply disappeared.

Agentic AI is on the same path. Today’s landscape includes tens of thousands of generative AI startups and hundreds of agentic AI experiments. No market can sustain that number of vendors. The pattern will repeat: infrastructure gets commoditized by the big platforms, open-source projects mature, and customers gravitate toward the ecosystems that reduce complexity.

The timeline for this shakeout is already visible. Through late 2025, hype will continue to attract funding. By mid-2026, many of the smaller players will run out of capital. By 2027, consolidation will leave only a few ecosystems standing. In the meantime, what will stabilize adoption are not the vendors themselves, but the standards that allow systems to interoperate and be secured.

“Most agentic AI propositions lack significant value or return on investment, as current models do not have the maturity and agency to autonomously achieve complex business goals or follow nuanced instructions over time.” — Anushree Verma, Senior Director Analyst at Gartner

Standards matter because they create trust. They define how agents communicate, how they authenticate, how they discover each other, and how their actions can be monitored. Without them, enterprises cannot integrate AI into regulated processes. With them, adoption becomes possible.

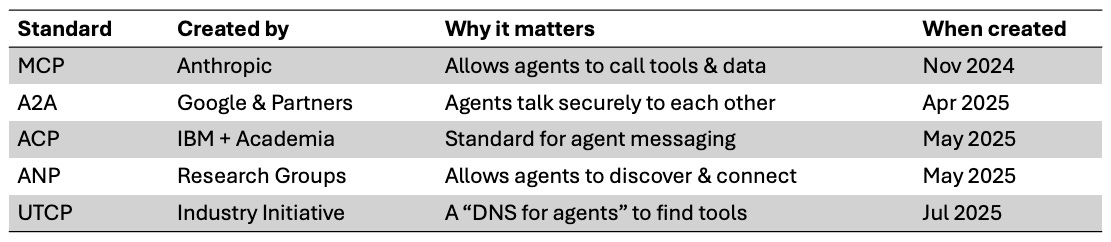

Here are the five most important standards to track today:

There are more emerging efforts—some academic, some industry-driven—but most will not last. For those who want the full picture, I’ve included an extended list of standards in the appendix. The point is not to memorize the acronyms but to recognize that until these standards mature, enterprise adoption will remain patchy and experimental.

Governance adds another layer. Regulations such as the EU AI Act are only beginning to grapple with agentic systems, and many of the hardest questions—liability, audit trails, decision explainability—remain unresolved. Startups chasing product-market fit are unlikely to prioritize these issues. That means enterprises must. Governance cannot be bolted on later. It has to be part of the evaluation from the start.

The timeline is therefore not just about hype and funding. It is about when the technology, the standards, and the governance align to make adoption safe and sustainable. Until then, the prudent course is to experiment, prepare, and watch the market consolidate.

Get Ready Now

The next 18 months are about preparation, not procurement—get your house in order.

The temptation in moments like this is to pick winners early. Vendors will push hard to persuade you that now is the time to commit. History suggests otherwise. Most of today’s startups will not survive the next two years.

Gartner predicts that more than 40 percent of agentic AI projects will be canceled by the end of 2027, citing escalating costs, unclear business value, and inadequate risk controls. That statistic should not discourage experimentation, but it should change how you frame your investments. The right strategy is to treat the next 18 months as a preparation window, not a procurement race.

My guess at a likely timeline looks like this:

Q3 2025: Hype continues, with heavy vendor marketing and rapid startup launches.

Q4 2025: Early signs of cooling as enterprises hesitate to commit.

Q1 2026: First wave of failures as many startups run out of funding.

Q2 2026: Standards like MCP and A2A begin to stabilize, enabling more consistent adoption.

Q3 2026: Early majority enterprises begin structured pilots tied to governance frameworks.

Q1 2027: First production deployments of agentic systems in regulated industries.

For leadership teams, the message is clear. Do not overcommit to today’s vendors. Build a portfolio of small, bounded experiments to test workflows and identify opportunities. Invest in the fundamentals: clean data, strong process management, security readiness. Follow the standards work so you know when the market is stabilizing. And most importantly, build organizational knowledge. The companies that learn how to work with these tools now will move faster when the technology becomes reliable.

None of this is an argument for doing nothing. It is an argument for preparing wisely. What becomes possible with agentic AI will go far beyond tinkering with workflows. It will change how you interact with customers and suppliers. It will reshape your operational model. In some industries, it may even rewrite the rules of competition. Changes on that scale are not technology evaluations. They are conversations your leadership team should be having now about strategy, risk, and positioning. The question is whether you will be ready when that moment arrives, or whether you will be left adapting on someone else’s terms.

Let’s Talk About It

This is not a technology choice; it’s a strategy discussion for the boardroom.

Every leadership team faces the same dilemma when a new technology wave arrives. Move too early and risk wasting money on tools that will not last. Move too late and risk being locked out of new markets or falling behind competitors who took the first steps. With agentic AI, both risks are real.

The right way to approach the next 18 months is not to chase vendors or bet on hype, but to prepare your organization for the changes to come. That means strengthening your data foundations, tightening your processes, and building the governance that will be required once standards take hold. It also means creating space for experimentation, so your teams gain the fluency they will need when adoption becomes practical.

The bigger point is that this is not just a technology question. It is a leadership conversation. Agentic AI will touch how you interact with customers, how you work with suppliers, and how you organize your operations. In some cases, it may change how your industry operates. Those are strategic choices, not procurement decisions. They belong on the agenda of your executive team.

If you want a structured discussion about how this might apply to your business, I offer 30-minute exploratory sessions with leadership teams. There is no template, no hard sell, and no obligation. The goal is to help you sharpen the questions you should be asking and to separate what matters from what can wait.

The next 18 months will not decide the future of agentic AI. But they will decide whether your organization is prepared to act with foresight or forced to adapt on someone else’s terms. The choice, as always, belongs to leadership.

Appendix

For readers who want the detail, here is the broader set of standards currently being proposed or tested around agentic AI. Not all of these will survive, but together they show the range of efforts to make agents interoperable, secure, and trustworthy.